Last week I was able to build a simple Flask template with some NLP functions and a separate Three.js scene. This week I wanted to explore the possibilities further. What kind of visuals would be realistic with Twitter data and this setup?

Looking into custom meshes

First, I spent time studying Three.js resources. Three.js is known for its rich collection of examples, but its documentation can be hard to navigate when looking for specific methods. Luckily there are amazing additional resources, like DiscoverThreeJs by Lewy Blue and the collectively written Three.js Fundamentals.

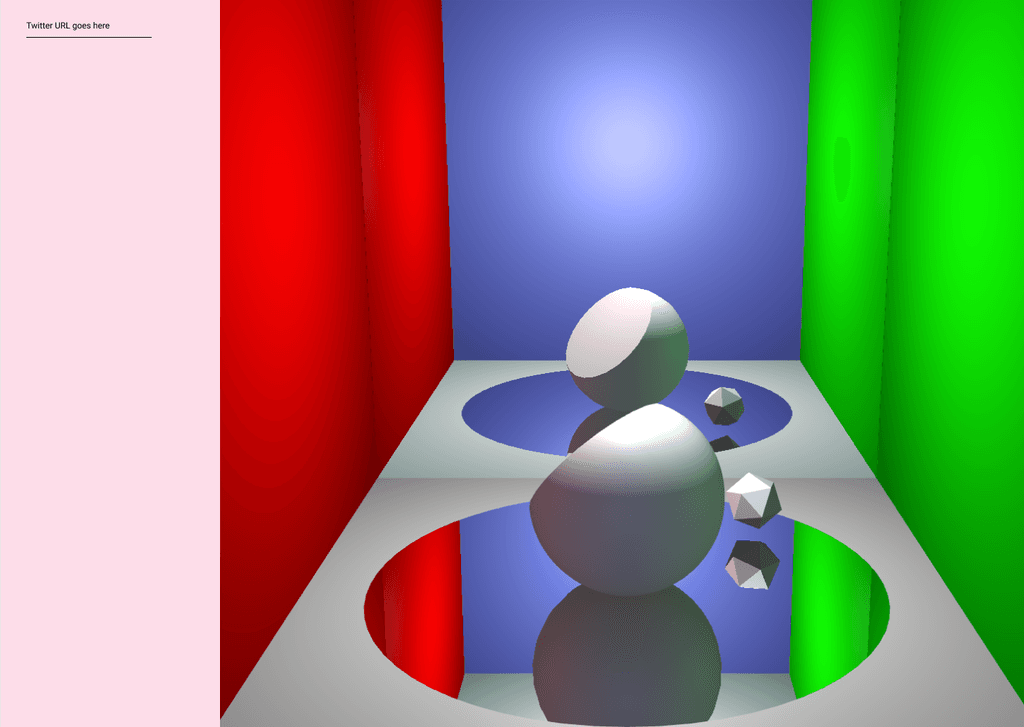

Having mostly studied generative visuals with openFrameworks and Processing, I found that Three.js came with quite a steep learning curve. One can render a simple cube or sphere very quickly with 3D primitives, but customising things requires more effort.

In Three.js, one can build a 3D sphere with either of these:

new THREE.SphereGeometry();

new THREE.BufferGeometry();

After studying the resources and chatting with Nikolai, it became clear that both are worth exploring in my project. I already know that I want to deform 3D shapes and change their surfaces based on data.

In my Flask app, I was able to scale and rotate a THREE.SphereGeometry based on NLP values. However, it became clear that in order to efficiently deform a sphere with a large number of vertices on every frame, I would need a vertex shader.
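To make the scale-and-rotate idea concrete, here is a minimal sketch of how sentiment values could be turned into transform parameters on the Flask side. The function name `sentiment_to_transform`, the scale range and the mapping itself are my own illustrative assumptions, not the exact code in the app. It relies on TextBlob reporting polarity between -1 and 1 and subjectivity between 0 and 1, and on Three.js expecting rotations in radians.

```python
import math


def sentiment_to_transform(polarity, subjectivity, min_scale=0.5, max_scale=2.0):
    """Map sentiment values to a scale factor and a rotation angle.

    polarity is expected in [-1.0, 1.0], subjectivity in [0.0, 1.0].
    The scale range and the mapping are illustrative assumptions.
    """
    # Clamp inputs so out-of-range values cannot produce a negative scale
    polarity = max(-1.0, min(1.0, polarity))
    subjectivity = max(0.0, min(1.0, subjectivity))

    # Normalise polarity from [-1, 1] to [0, 1], then interpolate the scale
    t = (polarity + 1.0) / 2.0
    scale = min_scale + t * (max_scale - min_scale)

    # Fully subjective text maps to one full turn (2 * pi radians)
    rotation = subjectivity * 2.0 * math.pi

    return {"scale": scale, "rotation": rotation}


transform = sentiment_to_transform(polarity=0.0, subjectivity=0.5)
print(transform)
```

A dictionary like this could be sent to the browser as JSON and applied to the mesh there. That works for whole-object transforms, but it does not help with per-vertex deformation.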

With a BufferGeometry, on the other hand, one can create any geometry that can be built with triangles, but one needs to define vertex positions in order to make a DIY mesh. I didn't have time to explore this option yet, but I'll do that later.
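As a rough sketch of what "defining vertex positions" means, the snippet below builds the raw data for a small flat grid made of triangles. It is written in Python only to stay in the same language as the Flask side. The helper `build_grid` is hypothetical, and a grid is used instead of a sphere to keep it short. The flat layout (three floats per vertex, three indices per triangle) mirrors how a BufferGeometry position attribute and index are laid out.

```python
def build_grid(segments=2, size=1.0):
    """Return flat vertex positions and triangle indices for a square grid in the XY plane."""
    positions = []  # x, y, z for each vertex, flattened
    indices = []    # three vertex indices per triangle, flattened
    row = segments + 1  # number of vertices per row

    # Lay out the vertices row by row, centred on the origin
    for j in range(row):
        for i in range(row):
            x = (i / segments - 0.5) * size
            y = (j / segments - 0.5) * size
            positions.extend([x, y, 0.0])

    # Split every grid cell into two triangles
    for j in range(segments):
        for i in range(segments):
            a = j * row + i  # lower-left corner of the cell
            b = a + 1        # lower-right
            c = a + row      # upper-left
            d = c + 1        # upper-right
            # Both triangles are counter-clockwise when viewed from +Z
            indices.extend([a, b, d, a, d, c])

    return positions, indices


positions, indices = build_grid(segments=2)
print(len(positions) // 3, "vertices,", len(indices) // 3, "triangles")
```

Deforming the mesh based on data would then come down to changing the numbers in `positions` before they are handed to the geometry.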

Time for mockups

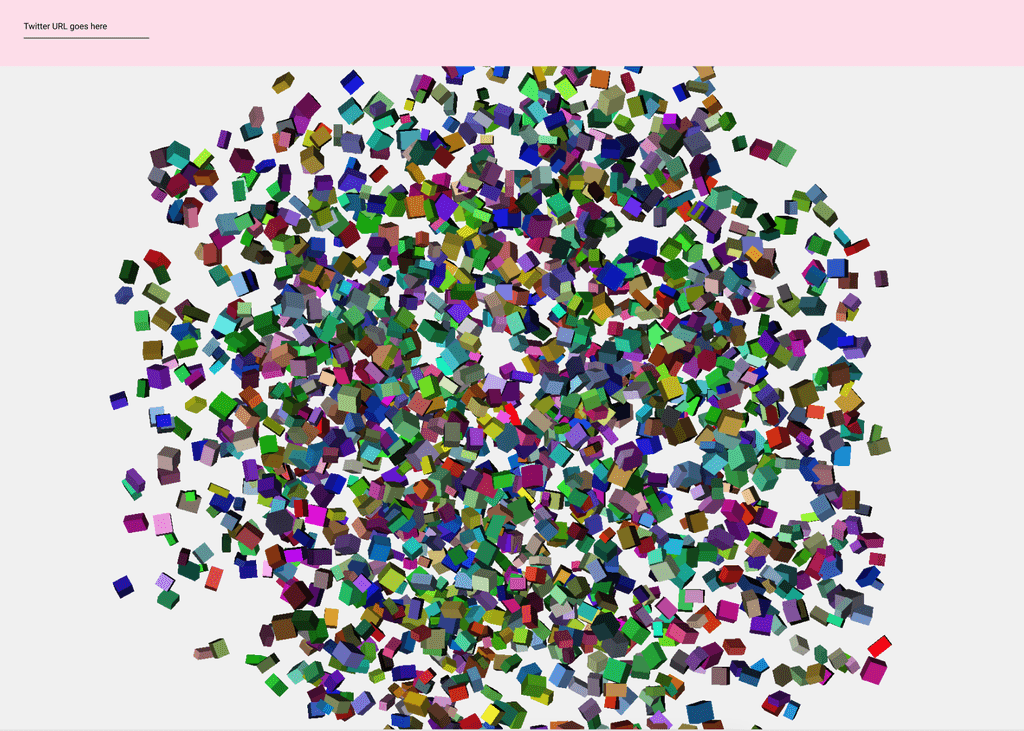

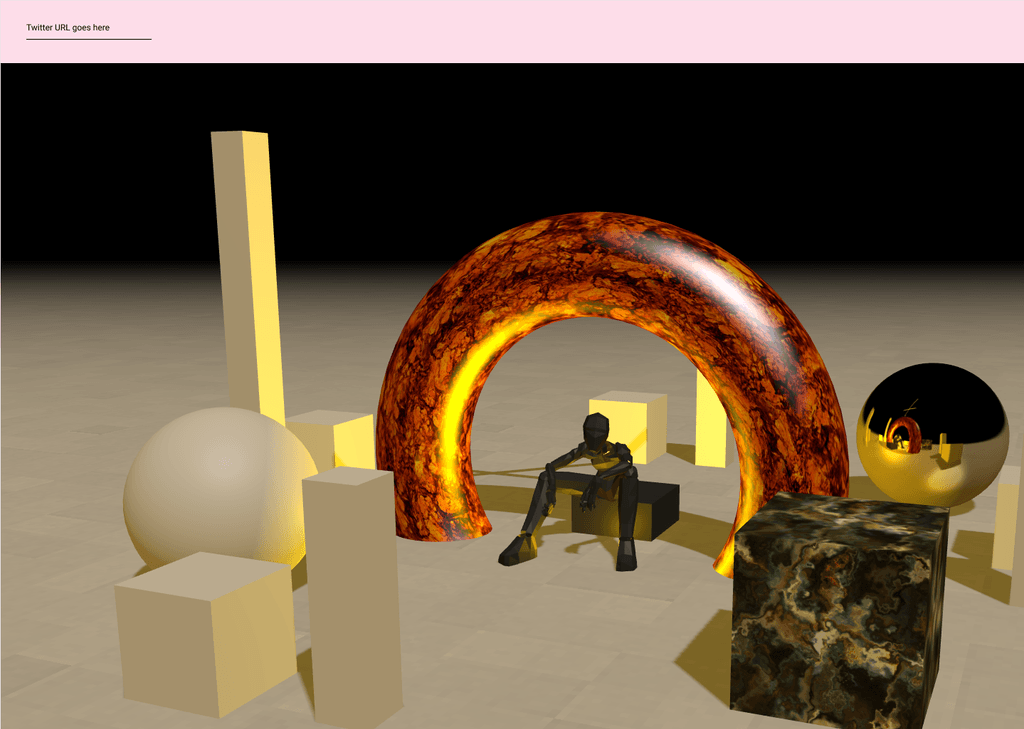

Before diving deeper into meshes, it felt like a good time to work on some mockups. For example, I had not decided yet if I wanted to treat each Twitter account as one text corpus or as separate tweets. This will have a crucial effect on the visual: will it be a space with a few objects, each representing one trait in the whole corpus, or a big bunch of small shapes, each representing one tweet?
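In data terms, the two directions could look roughly like this. The sample values are made up, the function names are hypothetical, and averaging is just one simple stand-in for a corpus-level value; analysing the whole text as one document would be another option.

```python
from statistics import mean

# Made-up (subjectivity, polarity) pairs standing in for real per-tweet results
sample_results = [(0.5, 0.25), (1.0, -0.5), (0.0, 0.0), (0.5, 0.75)]


def per_tweet_shapes(results):
    """Many small shapes: keep every tweet as its own record."""
    return [{"subjectivity": s, "polarity": p} for s, p in results]


def corpus_shape(results):
    """One object for the whole account: collapse all pairs into averages.

    Raises statistics.StatisticsError if results is empty.
    """
    return {
        "subjectivity": mean(s for s, _ in results),
        "polarity": mean(p for _, p in results),
    }


print(len(per_tweet_shapes(sample_results)), "small shapes")
print(corpus_shape(sample_results))
```

The first option keeps the variation between tweets visible, while the second one produces a single, calmer object per account.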

I made very simple mockups in Figma, exploring the different possible directions. I want the MVP to have a very minimal design, with just a field where one can type a Twitter username or Twitter URL and then get a visual beside it.

To illustrate the vibe of the 3D scene, I took some screenshots of Three.js examples to show my colleagues what the library can do.

I shared the mockups with the team and I’m looking forward to getting their feedback. I’m interested in hearing which solutions they find visually interesting and which aspects of their own Twitter accounts (sentiment, most common words etc.) they would want to learn about.

Finally, I made a small NLP test that convinced me that Python was the right choice for this project. With Tweepy and TextBlob, I was able to access a chosen Twitter account and append the sentiment values to a list with relatively few lines of code!

    from textblob import TextBlob

    # posts (the tweets fetched with Tweepy) and cleanTxt() are
    # assumed to be defined elsewhere; they are not shown here

    # creating an empty list for the results
    twitterResultsList = []

    for tweet in posts[:199]:
        # cleaning each tweet first
        cleanedText = cleanTxt(tweet.full_text)

        # making a TextBlob of each cleaned tweet
        tweet_text_sample = TextBlob(cleanedText)
        subjectivity = tweet_text_sample.sentiment.subjectivity
        polarity = tweet_text_sample.sentiment.polarity
        tweetresults = (subjectivity, polarity)

        # store each result in the list; each (subjectivity, polarity)
        # pair can be used to transform one small cube
        twitterResultsList.append(tweetresults)
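The cleaning step is not shown above, so here is a hedged sketch of what a helper like it might do. This `clean_txt` is a hypothetical stand-in, not my actual `cleanTxt`, and the regular expressions are assumptions about what counts as noise in a tweet.

```python
import re


def clean_txt(text):
    """Strip common Twitter noise from a tweet before sentiment analysis."""
    text = re.sub(r"^RT\s+@\w+:\s*", "", text)  # retweet prefix such as "RT @user: "
    text = re.sub(r"https?://\S+", "", text)    # links
    text = re.sub(r"@\w+", "", text)            # remaining @mentions
    text = text.replace("#", "")                # keep hashtag words, drop the symbol
    return re.sub(r"\s+", " ", text).strip()    # collapse leftover whitespace


print(clean_txt("RT @someone: Loving #threejs! https://example.com"))
# prints "Loving threejs!"
```

Keeping the hashtag words while dropping the symbol is a design choice: the words often carry sentiment, whereas links and mentions mostly add noise.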